Every business has workflows or pipelines it must maintain to keep operations running. Manufacturing companies consume raw materials to create finished products and in turn the sales department pipelines the products out the door to its customers. Internet tech startups aren’t any different. From hiring, lead generation, data onboarding, and sales pipelines all the way down to the software release management just like a brick-and-mortar manufacturing company. Every company produces some sort of good or service and can be broken down into a series of workflows.

People play an incredibly large part in every company workflow; however us humans can be costly and hard to manage which creates a challenge when scaling horizontally. A human can only remember so much and without machines it becomes impossible to grow effectively. With the advent of commodity software in our workflows we can get multiples of scale with the use of less human intervention. Another way to look at it is make the humans you have on staff more efficient.

Software is definitely the answer to hit multiples of scale but on the flip side outsourced human labor has also become a commodity due to services like Amazon’s mTurk to labor on oDesk and eLance. We’ve even successfully hired dozens of folks locally for over a year from Craigslist when we really need to maintain a high degree of data integrity and skill. One could easily achieve another level of scale by just hiring more people.

The big question really is where is the line between human intervention and software automation? The engineer in me immediately screams, “software!†but in reality this is the wrong way to begin to look at the problem. If you’re automating something of a known quantity like a grocery store then go get yourself some point-of-sale system and call it a day. Software is clearly your answer and you can stop reading now. When you’re a fast paced startup and need to pivot quickly you wouldn’t architect an entire software stack around an ever-changing business model. You will spend more time and money rewriting code as your business adapts rather than having a human do the job from the beginning.

This is exactly how UPS scaled their business. They would use humans extensively and slowly automate different pieces of their jobs. One script after another would be written until finally they would string them all together into a pipeline of software. They did this for the same reason we do it; they were in uncharted waters. There’s no reason to double down on engineering that is 10x more expensive than much cheaper labor that can adapt quicker than machines.

Discovering Inefficiencies

Unfortunately every business is different and identifying scaling problems takes proper vision and knowledge of the business. If you’re reading this article then you probably understand all this so I will keep this section brief. To me, there’s one rule you have to follow that will allow all others to build upon it; “that which is measured is improvedâ€. You cannot even begin to optimize until you find the highest level of return or biggest problem area to focus on. Sure you can blindly go into different departments to help them optimize but how do you decide where to start?

The analogy I like to think of is diagnosing car troubles. If your water temperature gauge doesn’t work but your voltage gauge does and you solve your electrical issues your car still may overheat down the road. In reality, you can fix the battery issues later as long as the car starts but the highest value of return is fixing the overheating issues immediately.

Solving Inefficiencies

Taking a scientific approach to solving these problems is the most financially prudent and effective way to find scaling solutions. There are a few ways we have gone about doing this. The easiest (also least likely) way is to have the humans tell the engineers what they spend their day doing. This helps the engineers keep their heads down on other projects without directly getting involved in the nitty-gritty.

Unfortunately, not many non-engineers are able to actually explain what it is they do all day long in enough detail to replicate. We’ve had mixed success but our staff is learning how to distill their use cases down more and more. In order to help them discover inefficiencies we have one golden rule; if you find yourself doing the same task for more than a few hours a day tell your boss or the engineers immediately!

If we step back even further and look at the bigger picture what I am really saying is question your job! Don’t just do something because you are told to do so. Many folks have a hard time with this concept and want to please their boss but in reality what we are after is scale. The only way to get to the next level of scale is to be introspective.

The other way we find inefficiencies is to send in the engineers. We have successfully achieved multiples of scale by having engineers shadow employees that have highly repeatable workflows. In some cases, we have even removed the human from the task for a few weeks while the engineer fills in to really dig deep into the problem. This can be a painful process for the engineer but it achieves extremely good results.

Knowing When to Stop

Always remember that just because you can do something doesn’t mean you should. Much like writing web software, scaling pipelines is an iterative process and it’s much easier to roll forward than to roll back. Removing all the human touch points too fast will mask problems and likely create more. Automation itself needs to be monitored otherwise you will lose sight of what’s really happening.

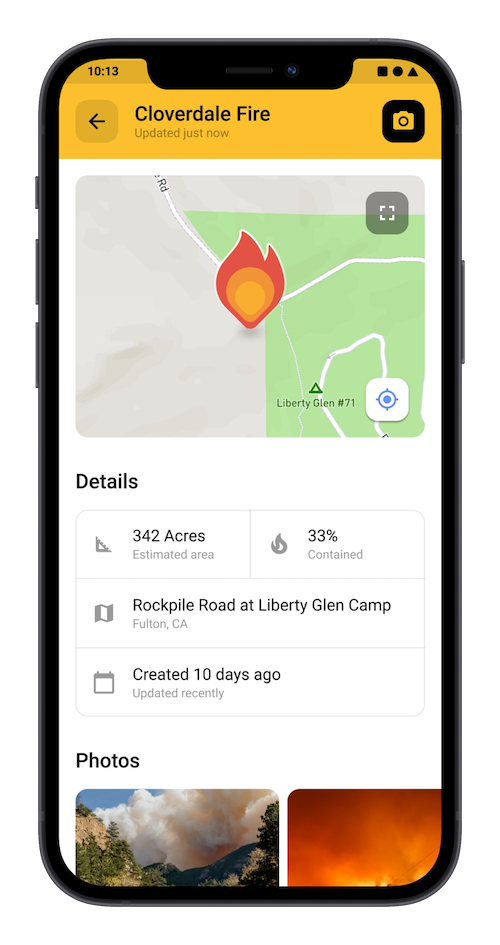

One of the tricks we employ is to automate things to the point of complete automation minus the final step. We do this for quality control purposes in many cases. For example, let’s say you need to gate an approval process part of your system. Rather than automatically approving an event to happen, we send an email to the administrators with the proper information they need to make the decision and several URL links we dub ‘one-click approval links’. This is our way of gating sensitive or otherwise high-risk events that require human attention. This buys us many levels of scale without sacrificing quality. If we hit the next level of scale and still require humans for quality reasons we can easily outsource this for very little cost.

Some pipelines like the one I just described will always require human interaction but other times we do fully automate. When this does happen we have to write even more software to monitor these events. Whether it be audit trails or reports that are emailed to us we need to keep an eye on the gauges. As we scale up it becomes more and more important to have a complete picture as to the status of your pipelines.

Conclusion

There really is no silver bullet to any of these problems and solutions come from careful study and measurement just like scientists would study behavior in lab. Software alone cannot solve all of your logistics problems to scale just as humans will never be able to achieve the same scale without software. Since humans are smarter than machines and will be for the foreseeable future you will need to weld the two together. Finding out how far to push the automation is really based upon your business needs and how closely you study your logistical systems.

My recommendation for anyone who is attempting to scale up their logistical pipelines from shipping product to onboarding data is to use humans and study their behavior closely. It will cost you less up front and in the long term as well as delivering a better more accurate end result. Measure twice and cut once.

With a title like that most people are probably thinking I have some sort of strange fetish — but alas, my sickness is far geekier. I’m talking of course about User Acceptance Testing (UAT).

With a title like that most people are probably thinking I have some sort of strange fetish — but alas, my sickness is far geekier. I’m talking of course about User Acceptance Testing (UAT).